After my tentative Polymath proposal, there definitely seems to be enough momentum to start a discussion “officially”, so let’s see where it goes. I’ve thought about the question of whether to call it Polymath11 (the first unclaimed number) or Polymath12 (regarding the polynomial-identities project as Polymath11). In the end I’ve gone for Polymath11, since the polynomial-identities project was listed on the Polymath blog as a proposal, and I think the right way of looking at things is that the problem got solved before the proposal became a fully-fledged project. But I still think that that project should be counted as a Polymathematical success story: it shows the potential benefits of opening up a problem for consideration by anybody who might be interested.

Something I like to think about with Polymath projects is the following question: if we end up not solving the problem, then what can we hope to achieve? The Erdős discrepancy problem project is a good example here. An obvious answer is that we can hope that enough people have been stimulated in enough ways that the probability of somebody solving the problem in the not too distant future increases (for example because we have identified more clearly the gap in our understanding). But I was thinking of something a little more concrete than that: I would like at the very least for this project to leave behind it an online resource that will be essential reading for anybody who wants to attack the problem in future. The blog comments themselves may achieve this to some extent, but it is not practical to wade through hundreds of comments in search of ideas that may or may not be useful. With past projects, we have developed Wiki pages where we have tried to organize the ideas we have had into a more browsable form. One thing we didn’t do with EDP, which in retrospect I think we should have, is have an official “closing” of the project marked by the writing of a formal article that included what we judged to be the main ideas we had had, with complete proofs when we had them. An advantage of doing that is that if somebody later solves the problem, it is more convenient to be able to refer to an article (or preprint) than to a combination of blog comments and Wiki pages.

With an eye to this, I thought I would make FUNC1 a data-gathering exercise of the following slightly unusual kind. For somebody working on the problem in the future, it would be very useful, I would have thought, to have a list of natural strengthenings of the conjecture, together with a list of “troublesome” examples. One could then produce a table with strengthenings down the side and examples along the top, with a tick in the table entry if the example disproves the strengthening, a cross if it doesn’t, and a question mark if we don’t yet know whether it does.

A first step towards drawing up such a table is of course to come up with a good supply of strengthenings and examples, and that is what I want to do in this post. I am mainly selecting them from the comments on the previous post. I shall present the strengthenings as statements rather than questions, so they are not necessarily true.

Strengthenings

1. A weighted version.

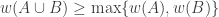

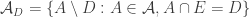

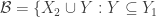

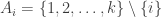

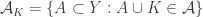

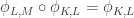

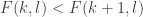

Let $w$ be a function from the power set of a finite set $X$ to the non-negative reals. Suppose that the weights satisfy the condition $w(A\cup B)\geq\max\{w(A),w(B)\}$ for every $A,B\subset X$ and that at least one non-empty set has positive weight. Then there exists $x\in X$ such that the sum of the weights of the sets containing $x$ is at least half the sum of all the weights.

Note that if all weights take values 0 or 1, then this becomes the original conjecture. It is possible that the above statement follows from the original conjecture, but we do not know this (though it may be known).

This is not a good question after all, as the claim in the previous paragraph, that 0-1 weights recover the original conjecture, is false. When $w$ is 0-1 valued, the statement reduces to saying that for every up-set there is an element in at least half the sets, which is trivial: all the elements are in at least half the sets. Thanks to Tobias Fritz for pointing this out.

2. Another weighted version.

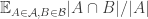

Let $w$ be a function from the power set of a finite set $X$ to the non-negative reals. Suppose that the weights satisfy the condition $w(A\cup B)\geq\min\{w(A),w(B)\}$ for every $A,B\subset X$ and that at least one non-empty set has positive weight. Then there exists $x\in X$ such that the sum of the weights of the sets containing $x$ is at least half the sum of all the weights.

Again, if all weights take values 0 or 1, then the collection of sets of weight 1 is union closed and we obtain the original conjecture. It was suggested in this comment that one might perhaps be able to attack this strengthening using tropical geometry, since the operations it uses are addition and taking the minimum.
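To make strengthening 2 concrete, here is a small brute-force sketch. It is not from the original discussion, and the function names are hypothetical. It checks the condition $w(A\cup B)\geq\min\{w(A),w(B)\}$ over the whole power set and computes, for each element, the share of the total weight carried by the sets that contain it. The sketch assumes weights are stored in a dict keyed by frozensets, with missing sets having weight 0.

```python
# Illustrative sketch; all function names are hypothetical.
from fractions import Fraction
from itertools import chain, combinations


def power_set(ground):
    """All subsets of `ground`, as frozensets."""
    items = sorted(ground)
    return [frozenset(c) for c in chain.from_iterable(
        combinations(items, r) for r in range(len(items) + 1))]


def satisfies_min_condition(w, ground):
    """Brute-force check of w(A | B) >= min(w(A), w(B)) for all A, B in the power set."""
    subsets = power_set(ground)
    return all(w.get(a | b, 0) >= min(w.get(a, 0), w.get(b, 0))
               for a in subsets for b in subsets)


def weighted_abundances(w, ground):
    """For each x: (weight of the sets containing x) / (total weight), computed exactly."""
    total = Fraction(sum(w.values()))
    return {x: Fraction(sum(v for s, v in w.items() if x in s)) / total
            for x in ground}


# 0-1 weights coming from the union-closed family {∅, {1}, {1, 2, 3}}.
ground = {1, 2, 3}
w = {frozenset(): 1, frozenset({1}): 1, frozenset({1, 2, 3}): 1}
print(satisfies_min_condition(w, ground))  # True
print(weighted_abundances(w, ground)[1])   # 2/3

# {{1}, {2}} is not union closed, and the condition fails because w({1, 2}) = 0.
print(satisfies_min_condition({frozenset({1}): 1, frozenset({2}): 1}, {1, 2}))  # False
```

The 0-1 case illustrates the remark above: the min-condition holds exactly when the weight-1 sets form a union-closed family.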

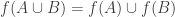

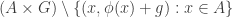

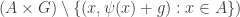

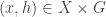

3. An “off-diagonal” version.

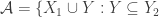

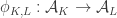

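Tom Eccles suggests (in this comment) a generalization that concerns two set systems rather than one. Given set systems $\mathcal{A}$ and $\mathcal{B}$, write $\mathcal{A}\uplus\mathcal{B}$ for the union set $\{A\cup B: A\in\mathcal{A}, B\in\mathcal{B}\}$. A family $\mathcal{A}$ is union closed if and only if $\mathcal{A}\uplus\mathcal{A}\subset\mathcal{A}$. What can we say if $\mathcal{A}$ and $\mathcal{B}$ are set systems with $\mathcal{A}\uplus\mathcal{B}$ small? There are various conjectures one can make, of which one of the cleanest is the following: if $\mathcal{A}$ and $\mathcal{B}$ are of size $m$ and $\mathcal{A}\uplus\mathcal{B}$ is of size at most $m$, then there exists $x$ such that $|\mathcal{A}_x|+|\mathcal{B}_x|\geq m$, where $\mathcal{A}_x$ denotes the set of sets in $\mathcal{A}$ that contain $x$. This obviously implies FUNC.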

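Simple examples show that $\mathcal{A}\uplus\mathcal{B}$ can be much smaller than either $\mathcal{A}$ or $\mathcal{B}$: for instance, it can consist of just one set. But in those examples there always seems to be an element contained in many more sets. So it would be interesting to find a good conjecture by choosing an appropriate function $f$ to insert into the following statement: if $|\mathcal{A}|=r$, $|\mathcal{B}|=s$, and $|\mathcal{A}\uplus\mathcal{B}|\leq t$, then there exists $x$ such that $|\mathcal{A}_x|+|\mathcal{B}_x|\geq f(r,s,t)$.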

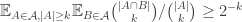

4. A first “averaged” version.

Let be a union-closed family of subsets of a finite set

. Then the average size of

is at least

.

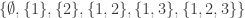

This is false, as the example shows for any

.

5. A second averaged version.

Let $\mathcal{A}$ be a union-closed family of subsets of a finite set $X$ and suppose that $\mathcal{A}$ separates points, meaning that if $x\neq y$, then at least one set in $\mathcal{A}$ contains exactly one of $x$ and $y$. (Equivalently, the sets $\mathcal{A}_x$ are all distinct.) Then the average size of $\mathcal{A}_x$ is at least $|\mathcal{A}|/2$.

This again is false: see Example 2 below.

6. A better “averaged” version.

In this comment I had a rather amusing (and typically Polymathematical) experience of formulating a conjecture that I thought was obviously false in order to think about how it might be refined, and then discovering that I couldn’t disprove it (despite temporarily thinking I had a counterexample). So here it is.

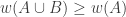

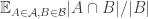

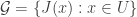

As I have just noted (and also commented in the first post), very simple examples show that if we define the “abundance” of an element $x$ to be $|\mathcal{A}_x|/|\mathcal{A}|$, then the average abundance does not have to be at least $1/2$. However, that still leaves open the possibility that some kind of naturally defined weighted average might do the job. Since we want to define the weighting in terms of $\mathcal{A}$ and to favour elements that are contained in lots of sets, a rather crude idea is to pick a random non-empty set $A\in\mathcal{A}$ and then a random element $x\in A$, and make that the probability distribution on $X$ that we use for calculating the average abundance.

A short calculation reveals that the average abundance with this probability distribution is equal to the average overlap density, which we define to be

$$\mathbb{E}_{A}\mathbb{E}_{B}\frac{|A\cap B|}{|A|},$$

where the averages are over $\mathcal{A}$, with $A$ restricted to the non-empty sets. So one is led to the following conjecture, which implies FUNC: if $\mathcal{A}$ is a union-closed family of sets, at least one of which is non-empty, then its average overlap density is at least 1/2.
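The “short calculation” can be spelled out as follows. This is a reconstruction, not a quotation. Write $\mathcal{A}^+$ (notation introduced here) for the non-empty members of $\mathcal{A}$. The probability of picking $x$ by first choosing $A\in\mathcal{A}^+$ uniformly and then $x\in A$ uniformly is $p(x)=\mathbb{E}_{A\in\mathcal{A}^+}\mathbf{1}[x\in A]/|A|$. The abundance of $x$ is $\mathbb{E}_{B\in\mathcal{A}}\mathbf{1}[x\in B]$. Therefore

$$
\begin{aligned}
\sum_{x\in X} p(x)\,\frac{|\mathcal{A}_x|}{|\mathcal{A}|}
&= \sum_{x\in X}\mathbb{E}_{A\in\mathcal{A}^+}\frac{\mathbf{1}[x\in A]}{|A|}\;\mathbb{E}_{B\in\mathcal{A}}\mathbf{1}[x\in B]\\
&= \mathbb{E}_{A\in\mathcal{A}^+}\mathbb{E}_{B\in\mathcal{A}}\frac{1}{|A|}\sum_{x\in X}\mathbf{1}[x\in A]\,\mathbf{1}[x\in B]
= \mathbb{E}_{A\in\mathcal{A}^+}\mathbb{E}_{B\in\mathcal{A}}\frac{|A\cap B|}{|A|}.
\end{aligned}
$$

Since the left-hand side is a weighted average of abundances, a lower bound of 1/2 on it forces some element to have abundance at least 1/2. This is why the conjecture implies FUNC.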

A not wholly pleasant feature of this conjecture is that the average overlap density is very far from being isomorphism invariant. (That is, if you duplicate elements of $X$, the average overlap density changes.) Initially, I thought this would make it easy to find counterexamples, but that seems not to be the case. It also means that one can give some thought to how to put a measure on $X$ that makes the average overlap density as small as possible. Perhaps if the conjecture is true, this “worst case” would be easier to analyse. (It’s not actually clear that there is a worst case: it may be that one wants to use a measure on $X$ that gives measure zero to some non-empty set $A\in\mathcal{A}$, at which point the definition of average overlap density breaks down. So one might have to look at the “near worst” case.)
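For experiments with non-uniform measures, here is a small exact-arithmetic sketch. It is not from the original post, and the function names are hypothetical. The first function computes the average overlap density $\mathbb{E}_A\mathbb{E}_B\,\mu(A\cap B)/\mu(A)$ with respect to positive weights $\mu$ on $X$, where $A$ ranges over non-empty sets and $B$ over all sets; uniform weights give the unweighted version. As a sanity check, the second function computes the average abundance under the distribution “random non-empty $A$, then $x\in A$ with probability $\mu(x)/\mu(A)$”. The sketch assumes $\mu$ is positive on every element of a non-empty set, because that is exactly where the definition would otherwise break down.

```python
# Illustrative sketch; all function names are hypothetical.
from fractions import Fraction


def measure(s, mu):
    """mu(S): the sum of the weights of the elements of S."""
    return sum((Fraction(mu[x]) for x in s), Fraction(0))


def average_overlap_density(family, mu):
    """E_A E_B mu(A ∩ B) / mu(A), with A over non-empty sets and B over all sets."""
    nonempty = [a for a in family if a]
    total = sum((measure(a & b, mu) / measure(a, mu)
                 for a in nonempty for b in family), Fraction(0))
    return total / (len(nonempty) * len(family))


def weighted_average_abundance(family, mu):
    """Average abundance when x is drawn as: random non-empty A, then x in A w.p. mu(x)/mu(A)."""
    nonempty = [a for a in family if a]
    result = Fraction(0)
    for x in set().union(*family):
        p_x = sum((Fraction(mu[x]) / measure(a, mu) for a in nonempty if x in a),
                  Fraction(0)) / len(nonempty)
        abundance = Fraction(sum(1 for b in family if x in b), len(family))
        result += p_x * abundance
    return result


family = [frozenset(), frozenset({1}), frozenset({1, 2, 3})]
uniform = {1: 1, 2: 1, 3: 1}
print(average_overlap_density(family, uniform))  # 5/9
# Giving elements 2 and 3 more weight (the effect of duplicating them) changes the value.
skewed = {1: 1, 2: 10, 3: 10}
print(average_overlap_density(family, skewed))   # 32/63
print(weighted_average_abundance(family, skewed) == average_overlap_density(family, skewed))  # True
```

In this small example, sending the weights of 2 and 3 to infinity drives the value towards exactly 1/2. This is the sort of “near worst” behaviour mentioned above.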

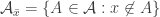

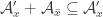

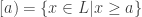

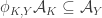

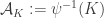

7. Compressing to an up-set.

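This conjecture comes from a comment by Igor Balla. Let $\mathcal{A}$ be a union-closed family and let $x\in X$. Define a new family $\mathcal{A}'$ by replacing each $A\in\mathcal{A}$ by $A\cup\{x\}$ if $A\cup\{x\}\notin\mathcal{A}$ and leaving it alone if $A\cup\{x\}\in\mathcal{A}$. Repeat this process for every $x\in X$ and the result is an up-set $\mathcal{U}$, that is, a set-system $\mathcal{U}$ such that $U\in\mathcal{U}$ and $U\subset V$ implies that $V\in\mathcal{U}$.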

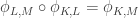

Note that each time we perform the “add if you can” operation, we are applying a bijection to the current set system, so we can compose all these bijections to obtain a bijection $\phi$ from $\mathcal{A}$ to $\mathcal{U}$.

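Suppose now that $A,B\in\mathcal{A}$ are distinct sets. It can be shown that there is no set $C$ such that $A\subset C\subset\phi(A)$ and $B\subset C\subset\phi(B)$. In other words, $A\cup B$ is never a subset of $\phi(A)\cap\phi(B)$.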
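The compression and the interval property can be checked on small cases with the following sketch. The function names are hypothetical, and the elements are processed in sorted order, which is an arbitrary choice. Each step decides simultaneously, using the current set system, which sets receive $x$. The check is only a test on examples, not a proof. The second example, $\{\emptyset,\{1\},\{2\}\}$, is not union closed, and there the interval property fails. This suggests that union-closedness really is used in the “it can be shown” claim.

```python
# Illustrative sketch; all function names are hypothetical.
from itertools import combinations


def up_compress(family, ground):
    """Apply 'add x if you can' for each x in turn; return phi as a dict A -> phi(A)."""
    phi = {a: a for a in family}
    for x in sorted(ground):
        current = set(phi.values())  # the current set system
        phi = {a: (img | {x} if x not in img and (img | {x}) not in current else img)
               for a, img in phi.items()}
    return phi


def is_up_set(system, ground):
    """Every superset (within ground) of a member is again a member."""
    return all((u | {x}) in system for u in system for x in ground - u)


def intervals_disjoint(phi):
    """True if A ∪ B is never a subset of phi(A) ∩ phi(B) for distinct A, B."""
    return all(not (a | b) <= (phi[a] & phi[b]) for a, b in combinations(phi, 2))


ground = {1, 2, 3}
family = [frozenset(), frozenset({1}), frozenset({2, 3}), frozenset({1, 2, 3})]
phi = up_compress(family, ground)
images = set(phi.values())
print(len(images) == len(family), is_up_set(images, ground), intervals_disjoint(phi))  # True True True
print(sorted(phi[frozenset()]))  # [2]

# Without union-closedness the interval property can fail: {∅, {1}, {2}}.
bad = up_compress([frozenset(), frozenset({1}), frozenset({2})], {1, 2})
print(intervals_disjoint(bad))  # False
```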

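Now the fact that $\mathcal{U}$ is an up-set means that each element $x\in X$ is in at least half the sets (since if $U\in\mathcal{U}$ and $x\notin U$, then $U\cup\{x\}\in\mathcal{U}$). Moreover, it seems hard for too many sets $A$ in $\mathcal{A}$ to be “far” from their images $\phi(A)$, since then there is a strong danger that we will be able to find a pair of sets $A$ and $B$ with $A\cup B\subset\phi(A)\cap\phi(B)$.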

This leads to the conjecture that Balla makes. He is not at all confident that it is true, but has checked that there are no small counterexamples.

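Conjecture. Let $\mathcal{A}$ be a set system such that there exist an up-set $\mathcal{U}$ and a bijection $\phi:\mathcal{A}\to\mathcal{U}$ with the following properties.

- For each $A\in\mathcal{A}$, $A\subset\phi(A)$.

- For no distinct $A,B\in\mathcal{A}$ do we have $A\cup B\subset\phi(A)\cap\phi(B)$.

Then there is an element that belongs to at least half the sets in $\mathcal{A}$.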
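For anyone searching for small counterexamples, here is a sketch of a checker for the two hypotheses. It is not Balla's code, and the names are hypothetical. It also checks that $\phi$ is injective and that its image is an up-set. A companion function returns the largest abundance, so a counterexample would be a $\phi$ that passes the check while every element has abundance below 1/2. The example $\phi$ was worked out by hand by applying the compression to the union-closed family $\{\emptyset,\{1\},\{2,3\},\{1,2,3\}\}$.

```python
# Illustrative sketch; all function names are hypothetical.
from fractions import Fraction
from itertools import combinations


def satisfies_hypotheses(phi, ground):
    """Check the hypotheses of the conjecture for a candidate bijection phi: A -> U."""
    images = set(phi.values())
    if len(images) != len(phi):                      # phi must be injective
        return False
    if not all((u | {x}) in images for u in images for x in ground - u):
        return False                                 # the image must be an up-set
    if not all(a <= img for a, img in phi.items()):  # A ⊂ phi(A) for each A
        return False
    return all(not (a | b) <= (phi[a] & phi[b]) for a, b in combinations(phi, 2))


def max_abundance(family, ground):
    """Largest proportion of sets in `family` containing a single element."""
    return max(Fraction(sum(1 for a in family if x in a), len(family)) for x in ground)


ground = {1, 2, 3}
phi = {
    frozenset(): frozenset({2}),
    frozenset({1}): frozenset({1, 2}),
    frozenset({2, 3}): frozenset({2, 3}),
    frozenset({1, 2, 3}): frozenset({1, 2, 3}),
}
print(satisfies_hypotheses(phi, ground), max_abundance(list(phi), ground))  # True 1/2
```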

The following comment by Gil Kalai is worth quoting: “Years ago I remember that Jeff Kahn said that he bet he will find a counterexample to every meaningful strengthening of Frankl’s conjecture. And indeed he shot down many of those and a few I proposed, including weighted versions. I have to look in my old emails to see if this one too.” So it seems that even to find a conjecture that genuinely strengthens FUNC without being obviously false (at least to Jeff Kahn) would be some sort of achievement. (Apparently the final conjecture above passes the Jeff-Kahn test in the following weak sense: he believes it to be false but has not managed to find a counterexample.)

Examples and counterexamples

1. Power sets.

If $X$ is a finite set and $\mathcal{A}$ is the power set of $X$, then every element of $X$ has abundance 1/2. (Remark 1: I am using the word “abundance” for the proportion of sets in $\mathcal{A}$ that contain the element in question. Remark 2: for what it’s worth, the above statement is meaningful and true even if $X$ is empty.)

Obviously this is not a counterexample to FUNC, but it was in fact a counterexample to an over-optimistic conjecture I very briefly made and then abandoned while writing it into a comment.

2. Almost power sets.

This example was mentioned by Alec Edgington. Let $X$ be a finite set and let $y$ be an element that does not belong to $X$. Now let $\mathcal{A}$ consist of $\emptyset$ together with all sets of the form $A\cup\{y\}$ such that $A\subset X$.

If $|X|=n$, then $y$ has abundance $2^n/(2^n+1)$, while each $x\in X$ has abundance $2^{n-1}/(2^n+1)$. Therefore, only one point has abundance that is not less than 1/2.

A slightly different example, also used by Alec Edgington, is to take all subsets of $X$ together with the set $X\cup\{y\}$. If $|X|=n$, then the abundance of any element of $X$ is $(2^{n-1}+1)/(2^n+1)$ while the abundance of $y$ is $1/(2^n+1)$. Therefore, the average abundance is

$$\frac{n(2^{n-1}+1)+1}{(n+1)(2^n+1)}.$$

When $n$ is large, the amount by which $(2^{n-1}+1)/(2^n+1)$ exceeds 1/2 is exponentially small, from which it follows easily that this average is less than 1/2. In fact, it starts to be less than 1/2 when $n=2$ (which is the case Alec mentioned). This shows that Conjecture 5 above (that the average abundance must be at least 1/2 if the system separates points) is false.
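The following sketch checks the closed form against a brute-force count. The function names are hypothetical, and the sketch again takes $X=\{1,\dots,n\}$ with $y$ represented by `"y"`.

```python
# Illustrative sketch; all function names are hypothetical.
from fractions import Fraction
from itertools import combinations


def power_set_plus(n):
    """All subsets of X = {1, ..., n} together with X ∪ {'y'}."""
    xs = list(range(1, n + 1))
    family = [frozenset(c) for r in range(n + 1) for c in combinations(xs, r)]
    return family + [frozenset(xs) | {"y"}]


def average_abundance(family):
    """Uniform average, over the ground set, of the abundances."""
    ground = set().union(*family)
    return sum(Fraction(sum(1 for a in family if x in a), len(family))
               for x in ground) / len(ground)


def closed_form(n):
    return Fraction(n * (2**(n - 1) + 1) + 1, (n + 1) * (2**n + 1))


for n in range(1, 6):
    print(n, average_abundance(power_set_plus(n)), closed_form(n))
# n = 1 gives exactly 1/2, and n = 2 gives 7/15.
```

Expanding the inequality, the average is below 1/2 exactly when $2^n>n+1$, that is, for every $n\geq 2$.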

3. Empty set, singleton, big set.

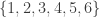

Let $m$ be a positive integer and take the set system that consists of the sets $\emptyset$, $\{1\}$ and $\{1,2,\dots,m\}$. This is a simple example (or rather class of examples) of a set system for which, although there is certainly an element with abundance at least 1/2 (the element $1$ has abundance 2/3), the average abundance is close to 1/3. Very simple variants of this example can give average abundances that are arbitrarily small: just take a few small sets and one absolutely huge set.
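A quick exact computation (a sketch with hypothetical names) shows that the average abundance of $\{\emptyset,\{1\},\{1,\dots,m\}\}$ is exactly $(2/3+(m-1)/3)/m=(m+1)/(3m)$. This is below 1/2 as soon as $m\geq 3$, which is also why this family refutes the first averaged version (strengthening 4). The sketch assumes $m\geq 2$ so that the three sets are distinct.

```python
# Illustrative sketch; all function names are hypothetical.
from fractions import Fraction


def empty_singleton_big(m):
    """The family {∅, {1}, {1, ..., m}} (take m >= 2 so the sets are distinct)."""
    return [frozenset(), frozenset({1}), frozenset(range(1, m + 1))]


def average_abundance(family):
    """Uniform average, over the ground set, of the abundances."""
    ground = set().union(*family)
    return sum(Fraction(sum(1 for a in family if x in a), len(family))
               for x in ground) / len(ground)


for m in (2, 3, 10, 100):
    print(m, average_abundance(empty_singleton_big(m)))  # equals (m + 1) / (3m)
```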

4. Using strong divisibility sequences.

I will not explain these in detail, but just point you to an interesting comment by Uwe Stroinski that suggests a number-theoretic way of constructing union-closed families.

I will continue with methods of building union-closed families out of other union-closed families.

5. Duplicating elements.

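I’ll define this process formally first. Let $X=\{1,2,\dots,n\}$ be a set of size $n$ and let $\mathcal{A}$ be a collection of subsets of $X$. Now let $E_1,\dots,E_n$ be a collection of disjoint non-empty sets and define $\mathcal{A}(E_1,\dots,E_n)$ to be the collection of all sets of the form $\bigcup_{i\in A}E_i$ for some $A\in\mathcal{A}$. If $\mathcal{A}$ is union closed, then so is $\mathcal{A}(E_1,\dots,E_n)$.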

One can think of $E_i$ as “duplicating” the element $i$ of $X$ $|E_i|$ times. A simple example of this process is to take the set system $\{\emptyset,\{1\},\{1,2\}\}$ and let $E_1=\{1\}$ and $E_2=\{2,3,\dots,m\}$. This gives the set system 3 above.
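The duplication construction takes only a line of Python. In this sketch the names are hypothetical, and `blocks[i]` plays the role of $E_i$. The example reproduces the set system 3 from $\{\emptyset,\{1\},\{1,2\}\}$ with $m=5$.

```python
# Illustrative sketch; all function names are hypothetical.
def duplicate(family, blocks):
    """A(E_1, ..., E_n): replace each element i of every set by the block E_i = blocks[i]."""
    return {frozenset().union(*(blocks[i] for i in a)) for a in family}


def is_union_closed(family):
    return all((a | b) in family for a in family for b in family)


base = {frozenset(), frozenset({1}), frozenset({1, 2})}
m = 5
dup = duplicate(base, {1: {1}, 2: set(range(2, m + 1))})
print(sorted(sorted(s) for s in dup))  # [[], [1], [1, 2, 3, 4, 5]]
print(is_union_closed(base), is_union_closed(dup))  # True True
```

Because the blocks are disjoint and non-empty, distinct sets stay distinct, so the family keeps its size.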

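Let us say that $\mathcal{A}\leq\mathcal{B}$ if $\mathcal{B}=\mathcal{A}(E_1,\dots,E_n)$ for some suitable set-valued function $i\mapsto E_i$. And let us say that two set systems are isomorphic if they are in the same equivalence class of the symmetric-transitive closure of the relation $\leq$. Equivalently, they are isomorphic if we can find $E_1,\dots,E_n$ and $F_1,\dots,F_k$ such that $\mathcal{A}(E_1,\dots,E_n)=\mathcal{B}(F_1,\dots,F_k)$.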

The effect of duplication is basically that we can convert the uniform measure on the ground set into any other probability measure (at least to an arbitrary approximation). What I mean by that is that the uniform measure on the ground set of $\mathcal{A}(E_1,\dots,E_n)$, which is of course $E_1\cup\dots\cup E_n$, gives you a probability of $|E_i|/(|E_1|+\dots+|E_n|)$ of landing in $E_i$, so has the same effect as assigning that probability to $i$ and sticking with the set system $\mathcal{A}$. (So the precise statement is that we can get any probability measure where all the probabilities are rational.)
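The correspondence between duplication and reweighting can be checked directly with the following sketch, whose names are hypothetical. It compares the uniform average abundance on the ground set of $\mathcal{A}(E_1,\dots,E_n)$ with the average abundance on the original ground set, where element $i$ is given probability proportional to $|E_i|$.

```python
# Illustrative sketch; all function names are hypothetical.
from fractions import Fraction


def duplicate(family, blocks):
    """A(E_1, ..., E_n) with E_i = blocks[i]."""
    return {frozenset().union(*(blocks[i] for i in a)) for a in family}


def abundance(family, x):
    return Fraction(sum(1 for a in family if x in a), len(family))


def uniform_average_abundance(family):
    ground = set().union(*family)
    return sum(abundance(family, x) for x in ground) / len(ground)


def weighted_average_abundance(family, weights):
    """Average abundance when element i gets probability weights[i] / sum(weights)."""
    total = sum(weights.values())
    return sum(Fraction(w, total) * abundance(family, i) for i, w in weights.items())


base = {frozenset(), frozenset({1}), frozenset({1, 2})}
blocks = {1: {1}, 2: {2, 3, 4, 5}}
dup = duplicate(base, blocks)
sizes = {i: len(e) for i, e in blocks.items()}
print(uniform_average_abundance(dup), weighted_average_abundance(base, sizes))  # 2/5 2/5
```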

If one is looking for an averaging argument, then it would seem that a nice property that such an argument might have is (as I have already commented above) that the average should be with respect to a probability measure on $X$ that is constructed from $\mathcal{A}$ in an isomorphism-invariant way.

It is common in the literature to outlaw duplication by insisting that $\mathcal{A}$ separates points. However, it may be genuinely useful to consider different measures on the ground set.

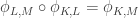

6. Union-sets.

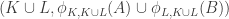

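Tom Eccles, in his off-diagonal conjecture, considered the set system, which he denoted by $\mathcal{A}\uplus\mathcal{B}$, that is defined to be $\{A\cup B: A\in\mathcal{A}, B\in\mathcal{B}\}$. This might more properly be denoted $\mathcal{A}\cup\mathcal{B}$, by analogy with the notation $A+B$ for sumsets, but obviously one can’t write it like that because that notation already stands for something else, so I’ll stick with Tom’s notation.

It’s trivial to see that if $\mathcal{A}$ and $\mathcal{B}$ are union closed, then so is $\mathcal{A}\uplus\mathcal{B}$. Moreover, sometimes it does quite natural things: for instance, if $X$ and $Y$ are any two sets, then $\mathcal{P}(X)\uplus\mathcal{P}(Y)=\mathcal{P}(X\cup Y)$, where $\mathcal{P}$ is the power-set operation.

Another remark is that if $X$ and $Y$ are disjoint, and $\mathcal{A}\subset\mathcal{P}(X)$ and $\mathcal{B}\subset\mathcal{P}(Y)$, then the abundance of $x\in X$ in $\mathcal{A}\uplus\mathcal{B}$ is equal to the abundance of $x$ in $\mathcal{A}$.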
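The union-set operation $\{A\cup B: A\in\mathcal{A}, B\in\mathcal{B}\}$ and the facts just mentioned are easy to test on small cases. This sketch uses hypothetical function names. It checks three things: the power-set identity, the preservation of abundance when the ground sets are disjoint, and the criterion that a family is union closed exactly when its union set with itself is contained in it.

```python
# Illustrative sketch; all function names are hypothetical.
from fractions import Fraction
from itertools import combinations


def power_set(ground):
    items = sorted(ground)
    return {frozenset(c) for r in range(len(items) + 1) for c in combinations(items, r)}


def union_set(fa, fb):
    """The union set {A ∪ B : A in fa, B in fb}."""
    return {a | b for a in fa for b in fb}


def abundance(family, x):
    return Fraction(sum(1 for s in family if x in s), len(family))


# P(X) ⊎ P(Y) = P(X ∪ Y), even when X and Y overlap.
print(union_set(power_set({1, 2}), power_set({2, 3})) == power_set({1, 2, 3}))  # True

# Disjoint ground sets: the abundance of x in A ⊎ B equals its abundance in A.
A = {frozenset(), frozenset({1}), frozenset({1, 2})}
B = {frozenset(), frozenset({3})}
print(abundance(union_set(A, B), 1), abundance(A, 1))  # 2/3 2/3
```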

7. A less obvious construction method.

I got this from a comment by Thomas Bloom. Let and

be disjoint finite sets and let

and

be two union-closed families living inside

and

, respectively, and assume that

and

. We then build a new family as follows. Let

be some function from

to

. Then take all sets of one of the following four forms:

- sets

with

;

- sets

with

;

- sets

with

;

- sets

with

.

It can be checked quite easily (there are six cases to consider, all straightforward) that the resulting family is union closed.

Thomas Bloom remarks that if consists of all subsets of

and

consists of all subsets of

, then (for suitable

) the result is a union-closed family that contains no set of size less than 3, and also contains a set of size 3 with no element of abundance greater than or equal to 1/2. This is interesting because a simple argument shows that if $\{x,y\}$ is a set with two elements in a union-closed family, then at least one of $x$ and $y$ has abundance at least 1/2.
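One way to see the two-element fact is given below. It is a standard argument, reconstructed here rather than quoted from the comment thread. Suppose $\{x,y\}$ belongs to a union-closed family $\mathcal{A}$. Sort the members of $\mathcal{A}$ according to which of $x$ and $y$ they contain, and write $\mathcal{C}_{11},\mathcal{C}_{10},\mathcal{C}_{01},\mathcal{C}_{00}$ (notation introduced here) for the four classes, with the first index recording $x$. Since $\{x,y\}\in\mathcal{A}$ and $\mathcal{A}$ is union closed, $A\mapsto A\cup\{x,y\}$ maps $\mathcal{C}_{00}$ injectively into $\mathcal{C}_{11}$. So $|\mathcal{C}_{00}|\leq|\mathcal{C}_{11}|$ and

$$
\begin{aligned}
|\mathcal{A}_x|+|\mathcal{A}_y| &= 2|\mathcal{C}_{11}|+|\mathcal{C}_{10}|+|\mathcal{C}_{01}|\\
&\geq |\mathcal{C}_{11}|+|\mathcal{C}_{10}|+|\mathcal{C}_{01}|+|\mathcal{C}_{00}| = |\mathcal{A}|,
\end{aligned}
$$

so one of $x$ and $y$ lies in at least half the sets.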

Thus, this construction method can be used to create interesting union-closed families out of boring ones.

Thomas discusses what happens to abundances when you do this construction, and the rough answer is that elements of become less abundant but elements of

become quite a lot more abundant. So one can’t just perform this construction a few times and end up with a counterexample to FUNC. However, as Thomas also says, there is plenty of scope for modifying this basic idea, and maybe good things could flow from that.

I feel as though there is much more I could say, but this post has got quite long, and has taken me quite a long time to write, so I think it is better if I just post it. If there are things I wish I had mentioned, I’ll put them in comments and possibly repeat them in my next post.

I’ll close by remarking that I have created a wiki page. At the time of writing it has almost nothing on it but I hope that will change before too long.

January 29, 2016 at 3:59 pm |

Something I should have mentioned is methods of creating smaller union-closed families out of given ones. For example, one can remove an element from $X$ and identify sets that become identical. Or one can take an equivalence relation on $X$ and restrict to just those sets that are unions of equivalence classes: this would be a “quotient” family.

This sort of construction could conceivably be useful in an inductive proof. At any rate, in any proof we are free to assume that all such families contain elements with abundance at least 1/2, though that seems too weak to use as an inductive hypothesis — probably induction has more chance of working with attempted averaging arguments.

January 29, 2016 at 4:38 pm

That use of the word “quotient” wasn’t very intelligent. What I should have said is that a subset of $X$ gives rise to a quotient family (since you have to identify elements of $\mathcal{A}$) while a quotient set of $X$ gives rise to a subfamily of $\mathcal{A}$. So some sort of duality is operating.

January 29, 2016 at 5:26 pm |

Logistical comment: have you considered getting a programmer (perhaps a volunteer) to write some more dedicated platform for hosting these discussions? The major weakness of having them in WordPress comments as I see it is that even when voting on comments is possible you can’t sort by votes (as you can on MathOverflow, say). Also there’s an annoying limit to how much you can nest comments, at least as far as I recall.

I think a platform something like Reddit but with LaTeX support would do a better job of bubbling up the best comments to everyone’s attention, to make it easier to follow the discussions casually.

January 29, 2016 at 10:03 pm

The r/math reddit [1] lists some scripts/extensions that one can use there to get the faux-TeX to display properly. I’ve not spent any appreciable time there or tried out the suggestions.

[1] https://www.reddit.com/r/math

January 31, 2016 at 4:20 pm

I made a polymath subreddit: https://www.reddit.com/r/polymathpoject/. I’m not sure I’m allowed to do this, but in the spirit of rapid prototyping I went ahead. I’m happy to hand it over to whoever wants to moderate it.

From what I understand, it might be best to have a subreddit for each polymath project or each “research community” (e.g. I have several email threads going with a bunch of collaborators, but we could use a private subreddit).

This bookmarklet will run mathjax on reddit: http://web.mit.edu/jcalz/Public/Reddit/mathjaxbookmarklet.html

February 4, 2016 at 2:48 pm

The discourse platform would already be a major step up: https://www.discourse.org/

January 29, 2016 at 6:17 pm |

Concerning the first weighted generalization “1.”, the assumption $w(A\cup B)\geq\max\{w(A),w(B)\}$ for all $A,B$ is equivalent to $w(A)\leq w(B)$ for all $A\subseteq B$, which is simply monotonicity of $w$. So when $w$ is the normalized counting measure of a set system, this assumption requires the set system to be upper closed! This is clearly not what we’re looking for, and therefore we might want to abandon the first weighted generalization in favour of the second, which indeed specializes to FUNC.

January 29, 2016 at 6:31 pm

Good point — I’ll edit the post later.

January 30, 2016 at 9:46 am |

Suppose we want to prove that the average overlap density of a union-closed family is always at least 1/2. An obvious idea is to try to use induction. That enables us to assume that the average overlap density of $\mathcal{A}_x$ is at least 1/2 for every $x$. But it actually allows us to assume more. We can define the average overlap density with respect to any probability measure we like on $X$, and the duplication procedure shows that we are forced to prove the result for all such measures, so we end up with a stronger inductive hypothesis that does not come at the cost of a stronger result that needs to be proved with the help of that hypothesis.

So the inductive hypothesis would be something like that for every $x$ and every probability measure $\mu$ on $X$ that gives non-zero probability to all the elements of $X$, the average overlap density of $\mathcal{A}_x$ with respect to $\mu$ is at least 1/2.

So far so good. Where I don’t have an idea is in how to use the hypothesis that $\mathcal{A}$ is union closed. It has to come in somewhere. But perhaps the right way to work that out is to try to carry out the above proof for an arbitrary set system $\mathcal{A}$ (which of course makes the result completely false) and then see what extra hypotheses are needed to make it work. Maybe one can identify some minimal hypotheses and then conjecture that those hold for union-closed families.

Of course, this average-overlap-density conjecture may very well be false, but in that case maybe pursuing an attempt like the above could lead to a way of constructing a counterexample.

January 30, 2016 at 11:23 am

Here’s a brief indication of why the average overlap density of $\mathcal{A}$ might be hard to express in terms of the average overlap densities of the $\mathcal{A}_x$. The average overlap density of $\mathcal{A}_x$ is roughly speaking the average overlap of a random set $A$ with a random set $B$ given that both $A$ and $B$ contain $x$. When we average that over $x$ (possibly with some weighted average) we are still calculating some kind of average overlap density but now we are conditioning on the event that $A$ and $B$ both contain some random element $x$. But one would expect this to increase the expected density of the overlap of $A$ and $B$. Thus, knowing that the average overlap densities of the $\mathcal{A}_x$ are large does not seem sufficient to obtain that the average overlap density of $\mathcal{A}$ is large.

It may be possible to get round this problem somehow. For example, the information that all the sets in $\mathcal{A}_x$ contain $x$ should enable us to say something stronger about the average overlap density of $\mathcal{A}_x$ than that it is at least 1/2. Indeed, there are two natural set systems to consider here: one is $\mathcal{A}_x$ and the other is $\{A\setminus\{x\}: A\in\mathcal{A}_x\}$. If $\{A\setminus\{x\}: A\in\mathcal{A}_x\}$ has average overlap density at least $\alpha$, then $\mathcal{A}_x$ should have average overlap density a bit bigger than this.

January 30, 2016 at 11:34 am |

I’ve done some more experimentation with Igor’s conjecture (7 above), this time following Tim’s suggestion of starting with a random upward-closed family and successively removing random abundant elements from random sets until one loses the desired property (or finds a counterexample). There are several tweakable parameters here, of course. But it seems difficult with this method to get the maximum abundance of the smaller set anywhere near 1/2 while maintaining the property.

January 30, 2016 at 5:15 pm

That’s not quite correct: it is easy to get the maximum abundance close to 1/2 by taking  close to  where  is the size of the ground set. Then  and  approach the power set, and the maximum abundance approaches 1/2. But I’ve found no counterexamples to the conjecture.

January 30, 2016 at 12:34 pm |

In the hunt for a strengthening amenable to induction, I really want to combine strengthenings 3 (off-diagonal) and 6 (average overlap density). It seems to me that there are two issues that immediately arise with an induction argument where we split the cube into the sets containing x and the sets not containing x (write $\mathcal{A}_x$ and $\mathcal{A}_{\bar{x}}$ for these two subfamilies):

1) The property of “having an abundant element” doesn’t play nicely with the split. If $\mathcal{A}_x$ and $\mathcal{A}_{\bar{x}}$ have an abundant element, we know little about abundance in $\mathcal{A}$.

2) When we split into $\mathcal{A}_x$ and $\mathcal{A}_{\bar{x}}$, the condition of “union-closed” becomes complicated – in fact, it becomes a few off-diagonal statements like $\mathcal{A}_{\bar{x}}\uplus\mathcal{A}_x\subset\mathcal{A}_x$.

2) is the problem that led me to want an off-diagonal conjecture. And 1) could be solved by a suitable averaging strengthening, like average overlap density.

January 30, 2016 at 9:22 pm

I had the same thought regarding problem 2) a while back, but I didn’t know how to deal with problem 1). It would indeed be interesting to engineer an “off-diagonal” and “averaged” conjecture that we could apply induction to.

January 31, 2016 at 10:16 am

Maybe a way to tackle the complication of the union-closed property when one splits is to do so head on. That is, perhaps one could take a subset $Y\subset X$ and for each $C\subset Y$ let $\mathcal{A}_C=\{A\setminus Y: A\in\mathcal{A}, A\cap Y=C\}$. Then we could try to work out what properties the set systems $\mathcal{A}_C$ must have. Actually, maybe that’s not so hard: I think we get that $\mathcal{A}_C\uplus\mathcal{A}_D\subset\mathcal{A}_{C\cup D}$. That looks as though it might conceivably be a condition one could work with, perhaps by generalizing the original conjecture a lot. Note that if we set $Y=X$, then $\mathcal{A}_C=\{\emptyset\}$ if $C\in\mathcal{A}$ and $\mathcal{A}_C=\emptyset$ otherwise, so in that case the condition above is just the condition that $\mathcal{A}$ is union closed.
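The splitting condition can be tested mechanically with the sketch below, whose names are hypothetical. For a chosen $Y$, it builds the families $\mathcal{A}_C=\{A\setminus Y: A\cap Y=C\}$ and checks that $\{A\cup B: A\in\mathcal{A}_C, B\in\mathcal{A}_D\}\subset\mathcal{A}_{C\cup D}$ for all $C,D$. On these small examples the condition agrees with union-closedness for every choice of $Y$. This is consistent with the observation that a union $A\cup B$ is recovered from its trace on $Y$ and its part outside $Y$.

```python
# Illustrative sketch; all function names are hypothetical.
from collections import defaultdict
from itertools import combinations


def split(family, Y):
    """Map each C ⊂ Y to A_C = {A - Y : A in family, A ∩ Y = C} (empty A_C are omitted)."""
    parts = defaultdict(set)
    for a in family:
        parts[a & Y].add(a - Y)
    return parts


def splitting_condition(family, Y):
    """Check that every union of a member of A_C and a member of A_D lies in A_{C ∪ D}."""
    parts = split(family, Y)
    return all((a | b) in parts.get(c | d, set())
               for c, pc in parts.items() for d, pd in parts.items()
               for a in pc for b in pd)


def is_union_closed(family):
    return all((a | b) in family for a in family for b in family)


subsets_of_ground = [frozenset(c) for r in range(4) for c in combinations((1, 2, 3), r)]
good = {frozenset(), frozenset({1}), frozenset({2, 3}), frozenset({1, 2, 3})}
bad = {frozenset(), frozenset({1}), frozenset({2})}
for fam in (good, bad):
    print(all(splitting_condition(fam, Y) == is_union_closed(fam) for Y in subsets_of_ground))  # True
```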

January 31, 2016 at 3:20 pm

One idea along these lines that doesn’t work out: I wondered whether $\mathcal{A}\uplus\mathcal{B}$ being small meant that one of the average overlap densities of $\mathcal{A}$ and $\mathcal{B}$ had to be large.

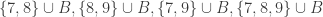

But that’s not true, even for $\mathcal{A}$ and $\mathcal{B}$ union-closed. If  and  , we can take  and  . The  , but both the average overlap densities are very small if the  are much smaller than the  .

January 31, 2016 at 9:35 am |

I have now started to generate intersection-closed set systems via strong divisibility sequences. While it was clear from the beginning that we cannot expect to get easy counterexamples here, I thought that the larger the sets get, the closer to a counterexample they go. This is false and the reason is quite obvious: I just do not have enough sets in my set system and thus remain inside the regime where FUNC is known to be true.

To give you an idea of what is going on: if I take the sets of prime divisors of the first 10,000 numbers, I get a system with 6,083 sets with 1,229 elements. Let $\mathcal{F}$ denote the set system and $P$ the set of primes used. The concept of average overlap density should translate like

If that is true, the above set system has average overlap density > 99.8%.

How to proceed? Let me take out (from $\mathcal{F}$) the sets that contain elements with the lowest frequency as long as this frequency is less than or equal to 1/2. Then I check whether I get a counterexample or the empty set. If this is not the case, I repeat. Does such an (inductive) approach have a chance to achieve something? In our situation we are ultimately talking about taking numbers from sets and so there might be some (very small) chance that we can describe the algorithm by some sort of sifting procedure.
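Here is a small sketch of the prime-divisor construction described above, using hypothetical names and plain trial division. The system is intersection closed because the primes dividing both $a$ and $b$ are exactly the primes dividing $\gcd(a,b)$, and $\gcd(a,b)$ is again in the range. Taking complements within the set of primes used turns it into a union-closed family. The sketch only illustrates the construction. It does not reproduce the 6,083-set count or the overlap-density figure quoted above.

```python
# Illustrative sketch; all function names are hypothetical.
def prime_divisors(n):
    """The set of primes dividing n (trial division; the empty set for n = 1)."""
    primes, p = set(), 2
    while p * p <= n:
        if n % p == 0:
            primes.add(p)
            while n % p == 0:
                n //= p
        p += 1
    if n > 1:
        primes.add(n)
    return frozenset(primes)


def prime_divisor_system(N):
    """Distinct sets of prime divisors of 1, 2, ..., N."""
    return {prime_divisors(n) for n in range(1, N + 1)}


def is_intersection_closed(family):
    return all((a & b) in family for a in family for b in family)


system = prime_divisor_system(10)
ground = set().union(*system)
print(len(system), sorted(ground))  # 7 [2, 3, 5, 7]
print(is_intersection_closed(system))  # True
# Complements within the ground set give a union-closed family.
complements = {frozenset(ground) - a for a in system}
print(all((a | b) in complements for a in complements for b in complements))  # True
```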

January 31, 2016 at 3:36 pm |

[…] discuss together what directions to peruse. It is a pleasure to mention that in parallel Tim Gowers is running polymath11 aimed for resolving Frankl’s union closed conjecture. Both questions were offered along with […]

January 31, 2016 at 9:22 pm |

Another potential variant/weakening of FUNC:

Let $\mathcal{A}$ be a union-closed family of sets. Define the abundancy of a set of elements to be the proportion of sets of $\mathcal{A}$ which contain all elements of that set.

FUNC says that some set of size $1$ has abundancy at least $1/2$. This trivially implies that, for every $k$ with $2^k \le |\mathcal{A}|$, there exists some $k$-set which has abundancy at least $2^{-k}$.

Let $k$-FUNC be this generalised statement, so that regular FUNC is $1$-FUNC. We conjecture that $k$-FUNC holds for all union-closed families with at least $2^k$ sets. Note that the other extremal case, when $|\mathcal{A}| = 2^k$, is trivially true.

There are a number of possible ways to fiddle with this setup to produce weaker, more asymptotic, statements. For example, how about the following family of conjectures.

Fix a decreasing function $F:\mathbb{N}\to (0,1]$. For every $k$ and every union-closed family of at least $2^k$ sets, some $k$-set has abundancy at least $F(k)$.

FUNC is then equivalent to this statement with $F(k) = 2^{-k}$. As a weakening, can this conjecture be established with any function $F$ such that  as $k \to \infty$?
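For small families, the abundancy defined above and the $k$-FUNC inequality can be checked by brute force. The following Python is an illustrative sketch only, and the helper names are hypothetical. It computes the union closure of a list of sets and then the largest abundancy of any $k$-set.

```python
from itertools import combinations


def union_closure(sets):
    """Smallest union-closed family containing the given sets."""
    family = {frozenset(s) for s in sets}
    changed = True
    while changed:
        changed = False
        for a, b in list(combinations(family, 2)):
            u = a | b
            if u not in family:
                family.add(u)
                changed = True
    return family


def max_abundancy(family, k):
    """Largest proportion of members of `family` containing a common k-set."""
    ground = sorted(set().union(*family))
    best = 0.0
    for K in combinations(ground, k):
        K = frozenset(K)
        best = max(best, sum(K <= A for A in family) / len(family))
    return best


def satisfies_k_func(family, k):
    """k-FUNC for one family: some k-set has abundancy at least 2**-k."""
    if len(family) < 2 ** k:
        raise ValueError("k-FUNC only concerns families with at least 2**k sets")
    return max_abundancy(family, k) >= 2 ** -k
```

On the power set of {1, 2, 3}, every $k$-set has abundancy exactly $2^{-k}$, so that example meets the bound with equality.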

February 1, 2016 at 8:46 pm

I like this $k$-FUNC conjecture. It seems a natural generalization, and a little experimentation with random union-closed families suggests it stands a chance of being true.

February 2, 2016 at 8:11 am

Just as for strengthening 6 above, one could recklessly strengthen this further. The conjecture would be this: choose a random $A \in \mathcal{A}$ with $|A| \geq k$ and a random $k$-element subset $K \subseteq A$. Then the number of $B \in \mathcal{A}$ containing $K$ is at least $2^{-k}|\mathcal{A}|$ on average. This is equivalent to the statement that

$$\sum_{A \in \mathcal{A},\ |A| \geq k}\ \sum_{B \in \mathcal{A}} \binom{|A \cap B|}{k}\Big/\binom{|A|}{k} \;\geq\; 2^{-k}\,|\mathcal{A}|\,\bigl|\{A \in \mathcal{A} : |A| \geq k\}\bigr|.$$

February 1, 2016 at 6:36 am |

Does anyone know where to find the article by Sarvate and Renaud on the conjecture? Or, more specifically, what is the counterexample they gave for the 3-element ground set case?

February 1, 2016 at 8:05 am

I could not find it either, but I believe the example you are looking for is also in Poonen’s paper, in the non-theorems section.

February 1, 2016 at 8:57 am

Tried to post the example but something went wrong. It is also quoted here: http://mathoverflow.net/q/228124

February 1, 2016 at 9:46 am

Since the ground set in this example has seven elements, it can’t be the set system that Thomas Bloom obtains by putting together two power sets using construction method 7. It would be good to have it clarified exactly what the relationship between these two examples is. In particular, is the exact example of Sarvate and Renaud (as listed on mathoverflow) obtainable by a nice construction method?

February 1, 2016 at 10:13 am

Which example do you mean when you say it has a ground set of 7 elements? Is this in Poonen’s paper?

The survey article (on p.15) describes a general construction for a UC family with a $k$-element set none of whose elements are abundant. I haven’t checked whether this gives the Sarvate–Renaud example for $k=3$ (but it does give an example with 9 elements).

February 1, 2016 at 12:42 pm

Oops, sorry, I meant the one on Mathoverflow, which has nine elements in its ground set.

February 1, 2016 at 4:05 pm

Actually, never mind, I worked that one out. I’m now working on the idea of induction on the original problem. Suppose we have a union-closed family F with one element x in at least half of its sets. We can extend this to a different family G such that, for each S in F, S or S ∪ {y} (or both) is in G, and make sure that G is union-closed. All that needs to be shown is that the number of sets in G containing both x and y is greater than the number of sets containing neither of them, and the induction will be complete. Unfortunately, I don’t think that last step will be that easy to prove (or even true).

February 1, 2016 at 1:29 pm |

Here’s another idea for a generalization that somebody else might be able to shoot down. As explained in the survey, one way to look for a proof of FUNC is to find an element $x$ and an injective map from the sets not containing $x$ to the sets containing $x$.

Now I wonder whether it is always possible to find such an injection $f$ which in addition preserves unions, i.e. which satisfies $f(A \cup B) = f(A) \cup f(B)$. It’s easy to see that this is indeed the case in examples 1 to 3 of the original post. The property is also unaffected by construction 5 (duplicating elements) and invariant under the union-sets construction 6, at least in the case of disjoint ground sets.

In terms of the lattice formulation of the survey, I’m asking whether one can always find a meet-preserving map  . The proof of Theorem 3, which shows that FUNC holds for all lower semimodular lattices, proceeds via constructing the injection  . This trivially preserves meets.

February 1, 2016 at 1:42 pm

Also the Sarvate–Renaud example has a union-preserving injection: the union-preserving map  injects  into  .

Inspired by this, here’s a further strengthening. Does there always exist an element $x$ and a set $B$ with $x \in B$ such that the map $A \mapsto A \cup B$, from the sets not containing $x$ to the sets containing $x$, is injective?

Now this certainly looks much too strong to be true, doesn’t it…?
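The strengthened question above is easy to test by brute force on small families. The Python below is a hypothetical helper, not code from the thread. It adds one assumption of its own: $B$ must be a member of the family. That guarantees $A \cup B$ stays in the family whenever the family is union-closed.

```python
def union_injection_witness(family):
    """Search for (x, B) with B in the family and x in B such that
    A -> A | B is injective on the members of `family` not containing x.
    Returns the first pair found, or None if there is none."""
    ground = sorted(set().union(*family))
    # Deterministic order: smaller candidate sets first.
    candidates = sorted(family, key=lambda s: (len(s), sorted(s)))
    for x in ground:
        without_x = [A for A in family if x not in A]
        for B in candidates:
            if x in B and len({A | B for A in without_x}) == len(without_x):
                return x, B
    return None
```

On the power set of {1, 2}, it returns x = 1 with B = {1}, i.e. the familiar map A ↦ A ∪ {1}.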

February 1, 2016 at 3:14 pm

It certainly does. But it would be very nice to have a counterexample, given that the recipes in the post don’t work. (Of course, I’d settle for a proof …)

February 1, 2016 at 3:51 pm

Do we know a small example of a lattice that is not lower semimodular?

February 1, 2016 at 4:12 pm

The smallest nonmodular lattice is $N_5$ (https://en.wikipedia.org/wiki/Lattice_%28order%29#/media/File:N_5_mit_Beschriftung.svg), and it isn’t semimodular either. But it’s also not a counterexample to my strong conjecture. In its lattice version, the conjecture would state that there exist a join-irreducible element  and a lattice element  such that  is an injection from  into  . To see this for $N_5$, just take  and  as labelled in the picture. (I’m using the notation from the survey, where  is the lattice and  the upper set.)

It’s easy to see

I don’t know any less trivial examples. As far as I understand, semimodularity of a lattice (very) roughly means that its Hasse diagram contains plenty of diamonds and that it permits a ‘dimension function’ stratifying the Hasse diagram into ‘levels’. So it might help to come up with lattices that don’t have these features.

February 1, 2016 at 5:17 pm

An attempt at a counterexample. In Polymath spirit I’ll start writing this comment before finding out whether it has any chance of working. Let’s just take all subsets of  of size  , where  is some fairly small number like 2. Let’s try  . Without loss of generality  . Then there are  sets in  that do not contain  . Hmm, this fails miserably since we can take  . What on earth made me think it could possibly work?

I wanted to exploit the fact that the union of two very large sets is almost always  . Here’s a second attempt. Take a random bunch of quite a lot, but not too many, sets of size  , and let  consist of their complements and all unions of their complements. Let  be the collection of  -sets.

For a set  to satisfy the conclusion of the strengthened conjecture, we need in particular that  is a union of sets of the form  for every  . That is, we need  to be an intersection of sets in  for each  . For the injectivity property we need the sets  to be distinct.

Suppose, in an attempt to make that hard to achieve, we take  to be a Steiner triple system. That is, every pair of elements of  is contained in exactly one set in  . If we take  to be a singleton  , then some of the sets  are pairs, and no pair is an intersection of sets in  . If we take  to be a pair  , then at most one set will contain  . So there will be many sets that contain  but not  , and again there will be many sets  that are pairs, and so not intersections of sets in  .

What if  is quite a lot bigger? Then there is a significant danger that we will be able to find some  and elements  such that  and  both belong to  .

That’s not exactly a proof, but I think it might be an idea worth pursuing.

February 1, 2016 at 5:22 pm

Maybe it’s easier than I thought. We have problems if there is ever a set  such that  , since then  is a pair. But in that case, look at all the sets that contain some element  . Their union is all of  (since we must cover each pair  ), and they all intersect  either in a set of size 2 or in the empty set. Also, they are disjoint apart from their intersection at  . So we get exactly the situation I described when I asked what happens when  is quite a lot bigger.

So I think this is a counterexample to the ludicrously strong conjecture, but as always there’s at least a 40% chance that I’ve made a silly mistake, as I have not checked this argument carefully.

February 1, 2016 at 9:11 pm

I suppose I should also check whether this example disposes of the first conjecture. So let  . Then, looking at complements, we would like to find an injection from the sets in  that contain  to the sets in  that don’t contain  , and this injection needs to preserve intersections.

Now the intersection of two sets that contain  is just  , so if  , then this says that we need to find  sets in  none of which contain  and all of which have the same intersection. That intersection can’t be  because we don’t have  elements to play with. It can’t be a singleton because we don’t have  elements to play with. It can’t be a pair because no pairs occur as intersections. And it can’t be a triple because no triple occurs as an intersection (of distinct sets in  , that is).

So I think this example shows that even the first conjecture is false, which suggests that finding a “structural” proof may be hard.

February 2, 2016 at 8:48 am

Wow! I’ve tried to walk through these arguments in the case of the Fano plane as the smallest nontrivial Steiner triple system. Confusingly, I seem to have found that the Fano plane *does* satisfy even the ludicrously strong conjecture. Following your idea, the intersection-closed set system consists of the 7 Fano lines, the 7 vertices and the empty set. When I say ‘set’ in the following paragraph, I’m referring to one of these.

In terms of the standard way of drawing the Fano plane, suppose that the rare element $x$ is the central vertex. Then there are four sets that contain $x$, namely the three interior lines and $\{x\}$ itself. By taking the intersection with the circular Fano line, we map these four sets injectively to sets that don’t contain $x$, namely to three vertices plus the empty set. This verifies the ludicrously strong conjecture. Or am I just being dense…?
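The check above can be confirmed mechanically. The Python sketch below uses hypothetical helper names. As a standard but assumed labelling, it builds the Fano plane from the difference set {0, 1, 3} modulo 7. It then forms the intersection-closed family of 7 lines, 7 points and the empty set, and lists every B in the family for which A ↦ A ∩ B is injective on the sets containing a given point.

```python
from itertools import combinations

# Assumed labelling: the Fano lines are the translates of {0, 1, 3} mod 7.
LINES = [frozenset((d + i) % 7 for d in (0, 1, 3)) for i in range(7)]
POINTS = [frozenset({p}) for p in range(7)]
# Intersection-closed family from the comment: 7 lines, 7 points, empty set.
FAMILY = set(LINES) | set(POINTS) | {frozenset()}


def is_intersection_closed(family):
    """Check that the intersection of any two members is again a member."""
    return all((a & b) in family for a, b in combinations(family, 2))


def intersection_witnesses(family, x):
    """All B in the family with x not in B such that A -> A & B is
    injective on the members of the family that contain x."""
    with_x = [A for A in family if x in A]
    return [B for B in family
            if x not in B and len({A & B for A in with_x}) == len(with_x)]
```

For every point, the witnesses are exactly the four lines missing that point. The three lines through the point land on three distinct points, and the singleton lands on the empty set.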

February 2, 2016 at 12:15 pm

The density was entirely mine. I was unthinkingly assuming that each triple in  would have to map to a triple in  , but it can map to a singleton, as your counter-counterexample shows. However, I think my basic idea may not be completely dead. I’ll start a separate comment thread below and have another try.

February 1, 2016 at 3:17 pm |

I agree that, put like that, your second conjecture seems too strong to be true, but I can’t find a counterexample.

If this strengthened version were true, however, it would fix the gap in the argument I had on the last blog post. It would therefore imply the strengthened FUNC with arbitrary weight functions (i.e. version 2 in this blog post).

February 1, 2016 at 4:27 pm

This was, of course, intended to be a reply to Tobias Fritz’s comment above.

February 2, 2016 at 12:45 pm |

Here is another attempt to disprove Tobias Fritz’s strengthenings of FUNC. I shall take complements and discuss the following equivalent problems.

1. Let $\mathcal{A}$ be an intersection-closed set system. Then there exist an element $x$ and an intersection-preserving injection $\phi$ from $\mathcal{A}_x$, the sets in $\mathcal{A}$ containing $x$, to $\mathcal{A}_{\bar{x}}$, the sets in $\mathcal{A}$ not containing $x$.

2. As 1, but with the added conclusion that $\phi$ is of the form $A \mapsto A \cap B$ for some fixed $B$.

I thought I had shown that a Steiner triple system is a counterexample to 1 (and therefore 2). My proof was wrong, because I accidentally assumed that triples had to map to triples. Now I want to explore what happens if I take a quadruple system, that is, a collection of 4-sets that covers each 2-set exactly once. (If this basic approach works, I think it should be possible to get a similar proof that doesn’t require results from design theory, by taking suitable random collections of  -sets and taking their intersections. But I may as well try to keep the proof simple by relying on whatever I want, even Keevash’s big theorem if necessary.)

What does  look like in this case? Well, we know that each pair that contains  is in exactly one 4-set from  , so this tells us that the sets  with  of size 4 form a partition of  . Also,  contains all sets of the form  , and the singleton  .

I would now like to argue that if  preserves intersections, then  cannot map to the empty set. So let’s assume for a contradiction that it does.

Observe first that if  is a quadruple in  , then the pairs  map to disjoint non-empty sets. Moreover, these sets must be subsets of  . Since  must be a quadruple if it has size at least 3, it must be a quadruple. (This is the key new ingredient to the argument.)

At this point, letting  , we have  quadruples in  that must map to disjoint quadruples in  , which requires that  , which ain’t gonna happen. (I think we can allow ourselves not to worry about the case  .)

So I think we now have that  must map to a non-empty set  . Again let’s think about what must happen to the quadruples in  . For any two of these, the intersection is  , so we need to find  sets in  such that any two of them intersect in  .

If  is a singleton  and all the sets we take are quadruples, then we don’t have enough elements (since we can’t use  or  for the remaining three elements). What happens if some quadruple  in  maps to a pair  that contains  ? That looks to me like a problem. The three pairs in  that contain  must map to distinct subsets of  that properly contain  , and we don’t have that. So I think quadruples still have to go to quadruples.

Let me just check whether that is a general lemma. My claim is that (without assuming that  goes to the empty set) quadruples have to go to quadruples. Suppose  is a quadruple in  . Then the images of the sets  and  (omitting the set brackets because I’m getting tired of writing them) have to be distinct subsets of  . If  , then we don’t have an intersection-preserving bijection that does the job.

However, I’m not out of the woods quite yet. I think I’ve shown that  can’t be empty or a singleton, but what if it is a pair? Ah, that is completely impossible, since I can find at most one quadruple in  that contains  .

OK, as cranks like to say, if you think this is wrong, then can you find a counter-counterexample? That is, can you exhibit a Steiner  -system  and an intersection-preserving injection from some  to  ?

February 2, 2016 at 2:39 pm

I would agree completely with this argument if it weren’t for the statement “Also,  contains all sets of the form  ”. How is this compatible with working with a Steiner  -system…?

In fact, I suspect that the projective plane  over the field with three elements is a counter-counterexample. It’s a Steiner  -system since any two points lie on a unique line, and all lines are  ’s, so that they contain four points. Now for any point  , the resulting  is also a  , meaning that it consists of four lines that only intersect in  . Now choose any other line that doesn’t contain  . Intersecting the sets in  with this line results in the desired map  , which apparently verifies the ludicrously strong conjecture in this case.
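The same mechanical check works for the projective plane over the field with three elements. As an assumption made only for this sketch, the plane is built from the perfect difference set {0, 1, 3, 9} modulo 13, and the code verifies that property before relying on it. The family is again lines, points and the empty set, and the helper names are hypothetical.

```python
from itertools import combinations

N = 13
DIFFERENCE_SET = (0, 1, 3, 9)  # assumed construction of the plane of order 3


def is_perfect_difference_set(d, n):
    """Every non-zero residue mod n is a difference of two elements exactly once."""
    diffs = sorted((a - b) % n for a in d for b in d if a != b)
    return diffs == list(range(1, n))


# Lines are the 13 translates of the difference set; each has four points.
LINES = [frozenset((a + i) % N for a in DIFFERENCE_SET) for i in range(N)]
FAMILY = set(LINES) | {frozenset({p}) for p in range(N)} | {frozenset()}


def intersection_witnesses(family, x):
    """All B in the family with x not in B such that A -> A & B is
    injective on the members of the family that contain x."""
    with_x = [A for A in family if x in A]
    return [B for B in family
            if x not in B and len({A & B for A in with_x}) == len(with_x)]
```

For the point 0 this finds nine such B, namely the nine lines that miss 0. This agrees with the argument above: any line not through the point works.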

For what it’s worth, there’s an illustration of  on p.42 of this beautiful book: http://www.uwyo.edu/moorhouse/handouts/incidence_geometry.pdf

The gist of why this seems to work is probably that  doesn’t have any interesting order structure: all the nontrivial sets in there are incomparable. In other words, the lattice for which we’re probing the conjecture has a height of only 4 (bottom, top, a level of atoms and a level of coatoms), and this seems to be too small.

Thinking along these lines, it might be better to consider lattices of height 5, such as the face lattice of a 3-dimensional polytope. Consider e.g. the dodecahedron: simply by visual inspection, I have the impression that the ludicrously strong conjecture can’t possibly hold for its face lattice, but I haven’t yet found a rigorous and complete proof.

Of course, any pointers to errors in my reasoning will be very welcome.

February 2, 2016 at 2:41 pm

To clarify my question about the sets of the form  : I don’t understand how those are supposed to arise as intersections of blocks in the Steiner system.

February 2, 2016 at 3:04 pm

Oh dear, I need to get my brain properly into gear before writing out long arguments. This time I somehow fooled myself into thinking that the pairs were all in  because they are all covered. Yet later in the argument I made crucial use of the fact that they were not intersections of sets in the system.

I still feel that something along these lines ought to work though. Back to the drawing board.

February 2, 2016 at 3:26 pm |

I’m not necessarily going to finish this comment, but let me see whether I can prove that a Steiner  -system  works for some  . For now I won’t specify the values of  and  .

Since each  -set is covered exactly once, the number of sets in  is  . Given any  -set  , let  contain  and let  . Then  must be covered by some set  , which gives us two sets in  that intersect in  . So, if I’m not much mistaken (a highly non-trivial assumption), the intersection closure of  is  together with all sets of size at most  . Let this intersection closure be  .
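The block count in the first sentence above is the standard one for a Steiner system. As a hedged illustration, write S(t, k, n) for a Steiner system whose k-element blocks cover every t-subset of an n-element set exactly once. This labelling is ours and not necessarily the one used in the comment. The helper name is hypothetical, and `math.comb` needs Python 3.8 or later.

```python
from math import comb


def steiner_block_count(t, k, n):
    """Number of blocks in a Steiner system S(t, k, n): each of the C(n, t)
    t-subsets lies in exactly one block, and each block contains C(k, t) of them."""
    total, per_block = comb(n, t), comb(k, t)
    if total % per_block:
        raise ValueError("divisibility fails, so no S(t, k, n) exists")
    return total // per_block
```

The divisibility condition is necessary but not sufficient. A whole-number answer does not by itself show that the system exists. For example, S(3, 5, 17) would have C(17, 3)/C(5, 3) = 680/10 = 68 blocks.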

Now let  be an intersection-preserving injection from  to  .

From my last, failed, attempt, it seems like a good idea to try to prove that the image of a set of size  must have size  . So let  have size  .

We know that if  belongs to  , then  . Therefore, we have an injection from subsets of  of size at most  that contain  to proper subsets of  . There are at least  subsets of the first kind. If  , then there are at most  sets of the second kind. So this is impossible if  , something we can easily arrange by choosing appropriate  and  .

As a sanity check, let me see what happens with  and  . There I get  versus  , and the condition does not hold, which is reassuring …

So now I know (in the “know until Tobias points out my stupid mistake” sense) that the  -sets in  have to map to  -sets in  . Moreover, their images must all contain the set  .

I’ll think for a moment and then continue.

February 2, 2016 at 3:56 pm

I’m now going to make an additional assumption about  : for each  we can find sets in  with union  and with all intersections equal to  . This doesn’t follow from the definition of a design, but my guess is that it is achievable if one runs Keevash’s argument carefully or modifies it slightly.

In that case, the arguments I gave in my wrong proof show that  cannot be empty or a singleton, since in both cases one has a system of disjoint sets with cardinalities that add up to something too big. (In the first case we get  disjoint subsets of  of size  , and in the second case we get that number of disjoint subsets of a set  of size  .)

But I still need to rule out  having cardinality greater than 1, which is no longer trivial since we have sets in the system of sizes up to  .

Let’s suppose that  is a set of size 2. There are  sets of size  that contain  , and these must inject to sets in  that contain  . If they map to sets of size  , then we have problems because  . They can’t map to sets of size less than  because all their subsets need to map nicely to subsets as well.

The one case I don’t yet see how to rule out is that some sets of size  map to sets of size  . But I think that should be impossible. I’ll post this comment and think further.

February 2, 2016 at 4:03 pm

Ah, that’s easily shown to be impossible. If a set of size  maps to a set of size  , then there is nowhere for any superset of size  to map to.

So I now have yet another argument that feels right but may well be wrong. In this case, I have made a further assumption about the Steiner system but (i) I think that systems satisfying this further assumption probably exist and (ii) if this proof is correct, then probably one can find another proof that avoids this extra assumption, which I made mainly out of laziness.

February 2, 2016 at 4:21 pm

I forgot to mention that if  above has size bigger than 2, then things get even worse, so I think the argument is complete. However, I won’t feel happy about it unless someone OKs it.

February 2, 2016 at 5:01 pm

Very nice! Now I don’t see anything wrong with the argument. As far as I can see, it should be possible to choose  and  . So what is the smallest  for which a  -Steiner system exists? The following webpage has examples with  and  . Does anyone know interesting ways of constructing these?

February 2, 2016 at 5:02 pm

Sorry, I forgot the link: https://www.ccrwest.org/cover/steiner.html

February 2, 2016 at 5:11 pm

Phew!

February 2, 2016 at 5:17 pm

I’ve just checked the example with […] and luckily it satisfies the extra condition, since it contains the sets {1,2,3,7,14}, {1,4,9,11,13}, {1,5,12,16,17} and {1,6,8,10,15} and is cyclically symmetric.

February 2, 2016 at 5:27 pm

For another concrete example, projective 3-space over $\mathbb{F}_2$ should work, with […] being the collection of projective planes (=Fano planes). I think that this would have parameters […] (three points determine a unique plane), […] (size of the Fano plane), and […]. Off the top of my head I’m not totally sure about the extra condition, though.

In any case, I’m glad that the conjecture seems to be resolved! But unfortunately, since the intersection-closed families constructed this way feel quite ‘bottom-heavy’, this doesn’t look like a good way to look for counterexamples to FUNC itself, or does it?

February 2, 2016 at 6:28 pm

I thought about that earlier today. I think it should probably be possible to prove that no Steiner […]-system can work, and I have done a few calculations with that in mind. It would probably be a worthwhile thing to complete them, just to make sure. At some point soon I’ll try to say where I got to.

I also wonder whether it might be possible to prove a weakening of FUNC where one assumes that the set system has quite a lot of symmetry — an obvious hypothesis being that its symmetry group acts transitively on the ground set. Is there some easy argument that does this case?

The two questions are loosely related, since a design, though not necessarily symmetric, is at least highly balanced. Maybe one could prove a fairly general result that FUNC is true for sufficiently balanced systems, for some suitable notion of “balanced”.

February 3, 2016 at 6:49 am

Forget about projective 3-space (in my last comment): it’s not even a Steiner system! It’s true that every triple of points is contained in a plane, but this plane is not unique if the points are collinear…

February 3, 2016 at 12:35 pm

If we want to take this a bit further still, we could try to find examples in which not even an injective and /monotone/ map […] exists.

Tim’s argument already goes quite some way to proving this: the only case for which he actually needs the preservation of unions, as opposed to mere monotonicity, is in the paragraph where he shows that […] can’t be empty or a singleton.

February 2, 2016 at 5:38 pm

On sort of a whim, I decided to program a naive random union-closed family generator and see if it led me to anything interesting. My initial process is very simple, but it does work. I start by choosing a universe (i.e. choosing an $n$, and having everything live in the integers from $1$ to $n$), a probability parameter $p$, and a number $m$ of sets. Then I create these $m$ sets in the following (possibly too simple) way: each integer up to $n$ has probability $p$ of being included. Finally, I take the closure under unions of the family.

I’ve learned a few things, and some thoughts have jumped out at me. I think I’d like to carry this experiment forward a bit and implement checkers for some of the proposed strengthenings mentioned above [if possible].

But one thing is very obvious to me: this is not a good way of generating “interesting” union-closed families, and in almost all of the resulting families more than half of the elements lie in at least half of the sets. I’m not quite sure what to change yet, but it’ll be hanging around my thoughts.
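For anyone who wants to experiment, here is a minimal Python sketch of a generator along the lines described above. It is not the commenter’s program. It assumes that the universe is the integers 1 to n, that each of the m sets includes each integer independently with probability p, and that the empty union is kept in the closure. The function names are hypothetical. It also counts how many elements lie in at least half the sets, which is the quantity the observation above is about.

```python
import random


def random_generators(n, p, m, rng):
    """Return m random subsets of {1, ..., n}; each integer is included independently with probability p."""
    return [frozenset(i for i in range(1, n + 1) if rng.random() < p) for _ in range(m)]


def union_closure(sets):
    """Return all unions of sub-collections of `sets`, including the empty union (an assumption)."""
    family = {frozenset()}
    for s in sets:
        # If `family` is already union-closed, adding s only creates the sets s | f.
        family |= {s | f for f in family}
    return family


def abundances(family):
    """Map each element of the ground set to the fraction of member sets that contain it."""
    ground = frozenset().union(*family)
    return {x: sum(1 for a in family if x in a) / len(family) for x in ground}


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so runs are reproducible
    family = union_closure(random_generators(n=12, p=0.3, m=8, rng=rng))
    ab = abundances(family)
    heavy = sum(1 for v in ab.values() if v >= 0.5)
    print(f"{len(family)} sets; {heavy} of {len(ab)} elements are in at least half of them")
```

Changing the seed, `p` or `m` gives a quick feel for how often the “heavy” elements make up more than half of the ground set.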

February 2, 2016 at 6:48 pm

I agree that it would be very interesting to try to come up with a random model that produces “promising” examples. One feature I would expect such a model to have is that if you take a minimal generating set $A_1,\dots,A_m$, then there are many coincidences amongst the unions of the $A_i$, so that in some sense we don’t get too many large sets. This seems unlikely to happen when the $A_i$ are chosen purely randomly, but perhaps there is some clever way of choosing a random object and then converting it deterministically into a “low-dimensional” system, or something like that.

February 3, 2016 at 11:00 am

To be a little more explicit about this, let’s suppose that $A_1,\dots,A_m$ is a generating set for a set-system $\mathcal{A}$. That is, $\mathcal{A}$ consists of all unions (including the empty union) that can be formed out of the $A_i$.

Suppose now that all those unions are distinct. In that case, it is trivial that $\mathcal{A}$ has an element in half the sets. Indeed, we have $2^m$ different unions, and each $A_i$ is involved in half of them, so every element of every $A_i$ is in at least half the unions.
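The counting step can be written out as follows. Write $A_1,\dots,A_m$ for the generators, $\mathcal{A}$ for the family they generate, and $A_X=\bigcup_{i\in X}A_i$ for $X\subseteq\{1,\dots,m\}$; this notation is assumed here for illustration. Because the $2^m$ unions are distinct, the sets $A_X$ with $i\in X$ are $2^{m-1}$ different members of $\mathcal{A}$, and each of them contains $A_i$:

```latex
x \in A_i
\;\Longrightarrow\;
\bigl|\{A \in \mathcal{A} : x \in A\}\bigr|
\;\ge\; \bigl|\{X \subseteq \{1,\dots,m\} : i \in X\}\bigr|
\;=\; 2^{m-1}
\;=\; \tfrac{1}{2}\,|\mathcal{A}|.
```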

So to have any hope of creating a counterexample to FUNC, one must find a generating set with the property that many unions coincide.

Can we be slightly more precise about this? For each set $X\subseteq\{1,\dots,m\}$, write $A_X$ for the set $\bigcup_{i\in X}A_i$. And let’s say that $X\sim Y$ if $A_X=A_Y$. Then the number of sets in $\mathcal{A}$ that contain $x$ is the number of equivalence classes that contain a representative $X$ with $x\in A_X$. And FUNC is satisfied if for some $x$ this is at least half the total number of equivalence classes.
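This equivalence-class count can be checked by brute force on small examples. The sketch below rests on some assumptions: generators are indexed from 0 in the code rather than from 1, the helper names are hypothetical, and enumerating all $2^m$ index sets is only practical for small $m$.

```python
from itertools import combinations


def union_classes(generators):
    """Group index sets X by the union A_X they produce.

    Returns a dict mapping each distinct union A_X to the list of index sets X
    (tuples of 0-based indices) in its class, where X ~ Y iff A_X == A_Y.
    """
    m = len(generators)
    classes = {}
    for r in range(m + 1):
        for X in combinations(range(m), r):
            A_X = frozenset().union(*(generators[i] for i in X))
            classes.setdefault(A_X, []).append(X)
    return classes


def classes_containing(classes, x):
    """Number of classes with a representative X such that x is in A_X.

    All representatives of a class share the same A_X, so this equals the
    number of sets in the generated family that contain x.
    """
    return sum(1 for A_X in classes if x in A_X)


def satisfies_func(classes):
    """True if some element lies in at least half of the classes (the distinct unions)."""
    total = len(classes)
    ground = frozenset().union(*classes)
    return any(2 * classes_containing(classes, x) >= total for x in ground)


if __name__ == "__main__":
    gens = [frozenset({1, 2}), frozenset({2, 3}), frozenset({1, 3}), frozenset({4})]
    classes = union_classes(gens)
    print(len(classes), {x: classes_containing(classes, x) for x in range(1, 5)})
```

In this example, every pair and the triple among the first three generators collapse to the same union {1, 2, 3}. That collapse is exactly the kind of coincidence discussed above.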

It’s worth making the trivial remark that there exist equivalence relations for which no such $x$ exists. For example, if each $A_i$ is the singleton $\{i\}$, we could define $X$ and $Y$ to be equivalent if they are either equal or both have size at least $2$.
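To see why such a relation leaves no element in half the classes, here is the count. It takes the generators to be the singletons $\{1\},\dots,\{m\}$ and the size threshold to be $2$; both are assumptions about the example made for illustration. There is one class for $X=\emptyset$, one for each singleton, and one for everything of size at least $2$. A given $i$ belongs only to the class of $\{i\}$ and to the large class:

```latex
\#\{\text{classes}\} = 1 + m + 1 = m + 2,
\qquad
\#\{\text{classes containing } i\} = 2 < \frac{m+2}{2}
\quad\text{whenever } m \ge 3.
```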

Actually, I’ve just noticed that this equivalence relation can occur. Let $A_i=\{1,\dots,m\}\setminus\{i\}$. Then any two distinct $A_i$ have union $\{1,\dots,m\}$, so each $A_i$ is contained in precisely two sets in $\mathcal{A}$.
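Here is a quick brute-force check of this kind of construction, offered as a sketch. It assumes the ground set $\{1,\dots,m\}$, takes each generator to be the ground set with one element removed, and keeps the empty union in the family. The helper names are hypothetical. The family comes out as the empty set, the $m$ generators and the whole ground set. Each generator therefore lies in exactly two of the $m+2$ sets, while every element still lies in $m$ of them.

```python
def union_closure(sets):
    """All unions of sub-collections of `sets`, including the empty union."""
    family = {frozenset()}
    for s in sets:
        family |= {s | f for f in family}
    return family


def complement_generators(m):
    """A_i = {1, ..., m} minus {i}, for i = 1, ..., m."""
    ground = frozenset(range(1, m + 1))
    return [ground - {i} for i in range(1, m + 1)]


m = 5
gens = complement_generators(m)
family = union_closure(gens)
# For each generator, count the members of the family that contain it.
supersets = [sum(1 for b in family if a <= b) for a in gens]
print(len(family), supersets)  # prints: 7 [2, 2, 2, 2, 2]
```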

So what I needed to say earlier was not just that there should be many coincidences, but also that the sets should be small. To put it another way, I’ve just given a simple counterexample to the following strengthening of FUNC: every generating set of a union-closed family contains a set that is a subset of at least half the sets in the family.

So it seems as though what we’re looking for is lots of coincidences, but for those coincidences to occur for a “clever” reason, and not just because the sets we have taken are very large.

February 3, 2016 at 11:54 am

I’m not quite convinced that ‘to have any hope of creating a counterexample to FUNC, one must find a generating set with the property that many unions coincide’. In the extreme case where they’re all disjoint, we get (effectively) the power set, but is the power set a ‘worst case’ or a ‘best case’? In terms of minimizing the maximum abundance, it’s (conjecturally) optimal. It’s not clear (to me) whether counterexamples are more ‘likely’ in relatively small or large families (though there are results that rule out both very small and very large ones).

February 4, 2016 at 12:30 pm

In order to produce better random examples, how about starting with a fixed set of candidate generators […], and then choosing randomly for every […] independently whether it will become an actual generator or not? For example, one could take […] to consist of the 3-sets associated to a finite group as suggested in the other thread: https://gowers.wordpress.com/2016/01/29/func1-strengthenings-variants-potential-counterexamples/#comment-154131

Similarly, one could choose some finite group […] and randomly select a bunch of 3-sets […] (with each element in the corresponding copy of […], and […]). At the moment I find it hard to say whether this is likely to generate better examples or not.
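Here is a minimal sketch of this random model, under stated assumptions. Each candidate generator is kept independently with a hypothetical probability `q`, and the kept sets are then closed under unions. The group-based 3-sets from the linked thread are not reproduced here. All 3-subsets of a small ground set stand in for the candidate list, purely as a placeholder.

```python
import random
from itertools import combinations


def union_closure(sets):
    """All unions of sub-collections of `sets`, including the empty union."""
    family = {frozenset()}
    for s in sets:
        family |= {s | f for f in family}
    return family


def random_model(candidates, q, rng):
    """Keep each candidate independently with probability q, then close under unions."""
    chosen = [c for c in candidates if rng.random() < q]
    return chosen, union_closure(chosen)


if __name__ == "__main__":
    # Placeholder candidates: every 3-subset of {1, ..., 7}.
    candidates = [frozenset(c) for c in combinations(range(1, 8), 3)]
    chosen, family = random_model(candidates, q=0.2, rng=random.Random(0))
    print(len(chosen), "generators ->", len(family), "sets in the union-closed family")
```

Swapping in a different candidate list, such as one built from a finite group, only changes how `candidates` is constructed.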

Benjamin Matschke has also suggested to me looking for counterexamples to FUNC via the probabilistic method. Along these lines, I’ve been wondering whether something like the above prescription for generating union-closed families would let us apply the Lovász local lemma to the family of events “$x$ has abundance at least 1/2” in order to show that there is positive probability for all $x$ to have abundance less than 1/2.

So far, I don’t know of an interesting way to guarantee the necessary independence conditions on these events (or negative correlation conditions for the lopsided LLL).

February 4, 2016 at 1:00 pm

It might be interesting to explore generating random ‘normalized’ examples. These are discussed in section 3.5 of the survey article: a separating union-closed family is normalized if it contains the empty set and its size is one more than the size of its ground set; and FUNC is equivalent to the conjecture that every normalized family includes a basis set of at least half this size (a basis set being one that’s not a union of two other sets in the family). For example, one could generate random candidates by repeatedly adding sets that are just under half the target size and forming the union-closure until one either gets a family of the right size, or exceeds it (in which case start again).
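The suggested generation procedure might be sketched as follows. The assumptions are these. The ground set is $\{1,\dots,n\}$ with $n\ge 3$. "Just under half" is read as the largest set size strictly below $n/2$. The target family size is $n+1$, including the empty set. A candidate is accepted only if its members cover the whole ground set and it is separating. Names such as `random_normalized_candidate` and `max_restarts` are hypothetical. Many runs are likely to return `None`, since the union-closure often overshoots the target size.

```python
import random


def is_separating(family, ground):
    """True if any two distinct elements are told apart by some member of the family."""
    members = list(family)
    # Two elements are separated iff their membership patterns across the family differ.
    patterns = {tuple(x in a for a in members) for x in ground}
    return len(patterns) == len(ground)


def random_normalized_candidate(n, rng, max_restarts=1000):
    """Try to build a normalized family on {1, ..., n} from generators of size just under n/2.

    Returns the family (a set of frozensets, including the empty set) or None.
    """
    ground = list(range(1, n + 1))
    k = (n - 1) // 2      # largest set size strictly below n/2 (assumed reading of "just under half")
    target = n + 1        # a normalized family has one more member than its ground set has elements
    for _ in range(max_restarts):
        family = {frozenset()}
        while len(family) < target:
            s = frozenset(rng.sample(ground, k))
            family |= {s | f for f in family}   # add s and keep the family union-closed
        if (len(family) == target
                and frozenset().union(*family) == frozenset(ground)
                and is_separating(family, ground)):
            return family
        # Overshot the target (or failed a check): start again.
    return None


if __name__ == "__main__":
    result = random_normalized_candidate(9, random.Random(0), max_restarts=200)
    print("no candidate found" if result is None else sorted(map(sorted, result)))
```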

February 4, 2016 at 4:47 pm

Interesting idea! I have implemented essentially this here:

https://cloud.sagemath.com/projects/da1aee8f-701b-4aa8-bc45-1a0a5e3f0d15/files/random_examples.sagews

Feel free to play with the program and improve the (very amateurish) code.

After a couple of runs, the smallest separating union-closed families with generators of size less than half of the ground set are as follows: for a ground set of size 15, the smallest family found has size 22; for a ground set of size 21, it’s 28. For all other ground-set sizes, the gaps are larger.